Empirical Evaluations of Non-Experimental Methods: Theory, Application and Synthesis

What We Do

This project has two goals for improving the theory, application, and synthesis of within-study comparison (WSC) designs, which are used to evaluate non-experimental methods: first, to establish a coherent framework for the design, implementation, and analysis of WSCs; and second, to establish an infrastructure for conducting an ongoing quantitative synthesis of WSC results.

Project Info

Funding Source: National Science Foundation

PROJECT SUMMARY

Despite the recent emphasis on randomized controlled trials (RCTs) for evaluating education interventions, observational methods remain the dominant approach for assessing program effects in most areas of education research. Given the widespread use of non-experimental (NE) approaches for assessing the causal impact of interventions in program evaluations, there is a strong need to identify which NE methods can produce credible impact estimates in field settings. Over the last three decades, the within-study comparison (WSC) design has emerged as a method to evaluate the performance of NE methods in field settings. In the traditional WSC design, treatment effects from a randomized experiment (RE) are compared to those produced by an NE approach that shares at least the same target population and intervention. The goals of the WSC are to determine whether the non-experiment can replicate results from a high-quality randomized experiment (which provides the causal benchmark estimate), and to identify the contexts and conditions under which NE methods work or do not work in practice.
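The core comparison in a traditional WSC can be illustrated with a minimal sketch. The function name, the input numbers, and the assumption that the two estimates are statistically independent are all hypothetical and chosen only for illustration; they are not the project's method. In practice the experimental and non-experimental arms often share the same treated units, in which case the standard error below would need to account for the resulting correlation.

```python
import math


def wsc_difference(re_estimate, re_se, ne_estimate, ne_se):
    """Compare a non-experimental (NE) estimate to an experimental (RE) benchmark.

    Returns the difference (NE minus RE) and its standard error.
    ASSUMPTION: the two estimates are independent, so their variances add.
    """
    diff = ne_estimate - re_estimate
    diff_se = math.sqrt(re_se ** 2 + ne_se ** 2)
    return diff, diff_se


# Made-up effect sizes and standard errors, for illustration only.
diff, diff_se = wsc_difference(
    re_estimate=0.20, re_se=0.03,
    ne_estimate=0.26, ne_se=0.04,
)

# Approximate 95% interval for the NE-minus-RE difference.
lower = diff - 1.96 * diff_se
upper = diff + 1.96 * diff_se
print(f"difference = {diff:.3f}, SE = {diff_se:.3f}, "
      f"95% CI = ({lower:.3f}, {upper:.3f})")
```

An interval that covers zero does not by itself show that the two designs agree; it may simply reflect low statistical power, which is one reason the correspondence between benchmark and non-experimental results needs careful assessment.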

This project has two goals for improving the theory, application, and synthesis of within-study comparison designs for evaluating non-experimental methods.

The first is to establish a coherent framework for the design, implementation, and analysis of within-study comparisons for evaluating non-experimental methods. The objectives include: explicating the required design components of WSCs and common threats to validity; developing resources to aid in planning and implementing WSCs, such as tools for calculating statistical power and a WSC research protocol; and examining methods for assessing correspondence between experimental and non-experimental results.

The second is to establish an infrastructure for conducting an ongoing quantitative synthesis of WSC results. To this end, the project will create a database of all existing WSC results and conduct a meta-analysis of them. The goal of the synthesis is to identify the contexts and conditions under which non-experimental methods are likely (or not likely) to produce credible causal impact estimates. Moreover, the ongoing collection of WSC results will allow us to continue exploratory analyses and to plan future WSCs in areas where information about non-experimental methods is sparse.

Upcoming Special Issue on Within-Study Comparison Designs in Evaluation Review

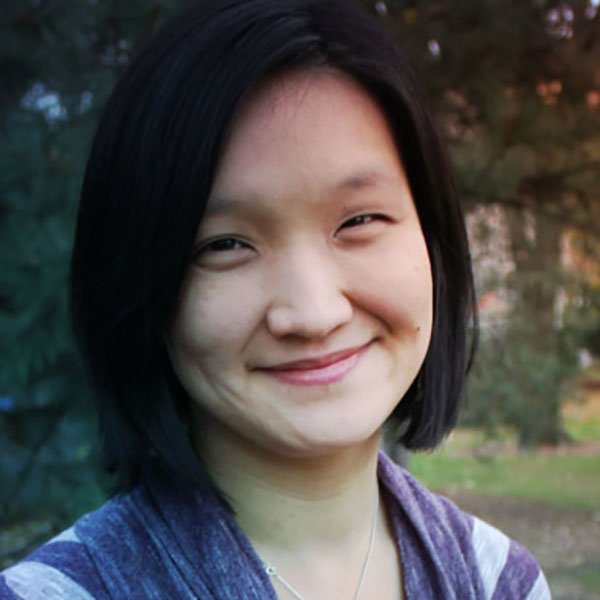

Project Team

External partners: Peter M. Steiner, Co-PI (University of Wisconsin, Madison)

SELECT PUBLICATIONS

Wong, V.C. & Steiner, P.M. (in progress). Research Designs for Empirical Evaluations of Non-Experimental Methods in Field Settings.

Steiner, P.M. & Wong, V.C. (in progress). Assessing Correspondence of Benchmark and Non-experimental Results in Within-Study Comparison Designs.

Wong, V.C., Valentine, J., & Miller-Bain, K. (under review). Covariate Selection in Education Observation Studies: A Review of Results from Within-study Comparisons.

Hallberg, K., Wong, V.C., & Cook, T.D. (under review). Evaluating Methods for Selecting School-Level Comparisons in Quasi-Experimental Designs: Results from a Within-Study Comparison.